INTRODUCTION

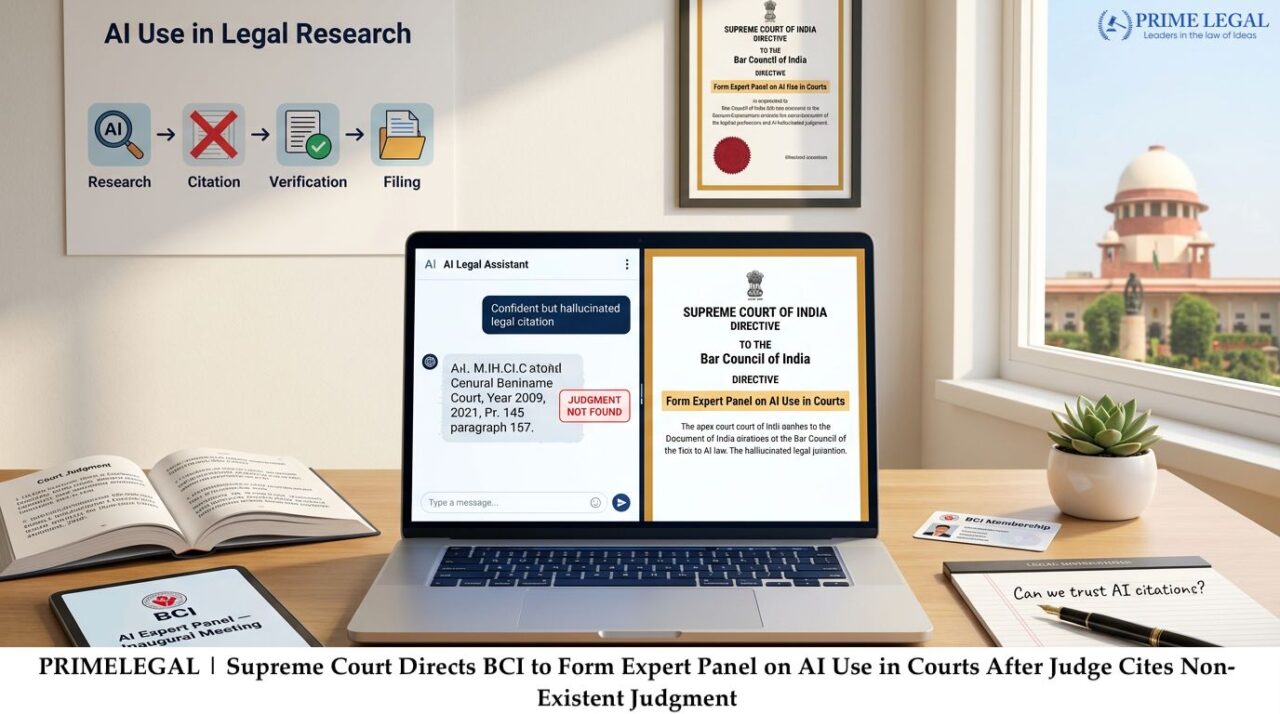

In a recent session, the Supreme Court of India addressed the growing misuse of Artificial Intelligence in legal proceedings, after a lower court relied on decisions that do not exist and were generated by AI software. A bench including Justice P.S. Narasimha and Justice Alok Aradhe asked the Bar Council of India to form a committee of experts to study the risks, ethical concerns and rules that are needed when AI is used in the courtroom.

The Court took the view that Artificial Intelligence is helpful for legal research and for working faster, but that relying on AI-generated material without checking it risks harming the integrity of legal processes and public trust.

BACKGROUND

The issue arose when the Supreme Court learned that a trial court, in Gummadi Usha Rani v. Sure Mallikarjuna Rao, had relied on precedents that do not exist. Those false references were generated by Artificial Intelligence tools. When AI systems make up information that appears to be true, the phenomenon is often called “AI hallucination”.

During the hearing, the judges observed that the problem goes beyond a single incident and raises questions about accountability, the conduct of lawyers and the reliability of AI-assisted work. Senior Advocate Shyam Divan, acting as a neutral advisor to the Court, said that the Supreme Court's Centre for Research and Planning has written a report on how the judiciary uses Artificial Intelligence and has suggested safeguards.

The judges made clear that the Court does not oppose new technology or the use of Artificial Intelligence in legal practice. They emphasized, however, that lawyers and courts cannot rely on machine-generated material unless they verify it themselves. The judges also expressed concern that India does not have its own sovereign large language models and that foreign Artificial Intelligence systems produce incorrect results in important India-specific legal matters.

KEY POINTS

- The Supreme Court asked the Bar Council of India to form a committee of experts in technology and law to examine the problems that arise when Artificial Intelligence is used in court.

- The problem started when a lower court relied on legal precedents that do not exist because Artificial Intelligence tools had generated them.

- The judges noted that Artificial Intelligence can generate false information and fake references, which, if left unchecked, can influence how judges decide cases or harm people's legal rights.

- The Court does not seek to prohibit the use of AI. It seeks a system in which users are accountable for their actions, check facts for accuracy and use the technology reliably.

- Senior Advocate Shyam Divan told the Court that the Supreme Court's Centre for Research and Planning has written a document on AI in the legal system, which sets out suggested rules and safeguards.

- The expert committee will study ethical conduct, the protection of legal procedures and the duties of professionals, and will look for ways to regulate the use of AI in court cases.

- On May 26, 2026, the Court will consider this matter again.

RECENT DEVELOPMENTS

These observations come amid worldwide concern that AI generates false legal information. Courts in the United States and the Middle East have recently dealt with cases in which lawyers cited decisions that AI had made up. Similar incidents have intensified debate in India over whether generative AI is dependable and how the law should regulate it. At the same time, the judiciary is adopting technology-based tools for translation, transcription and research, yet this episode shows that there are no official rules governing how lawyers, litigants and judges use generative AI.

Reports indicate that the Supreme Court is examining the creation of broad rules for the use of Artificial Intelligence when lawyers and judges draft legal documents. In those discussions, the Court stated that Artificial Intelligence is a tool that helps people work, but it also noted that “responsibility must be fixed” because some of the data presented in court is not correct or genuine.

CONCLUSION

To conclude, the Supreme Court's actions show that the judiciary is taking a careful and practical approach to new technologies. While the Court acknowledges that Artificial Intelligence can make the system faster and easier for people to use, it insists that these tools must not compromise the correctness of decisions or the accountability of legal professionals.

By asking the Bar Council of India to form a committee of experts, the Supreme Court has started a formal discussion about how to use new tools while keeping safeguards in place. If the Council develops a new framework, it will likely shape how the legal profession and court officials in India use Artificial Intelligence in the future.

“PRIME LEGAL is a National Award-winning law firm with over two decades of experience across diverse legal sectors. We are dedicated to setting the standard for legal excellence in civil, criminal, and family law.”

WRITTEN BY: SAMANA