INTRODUCTION:

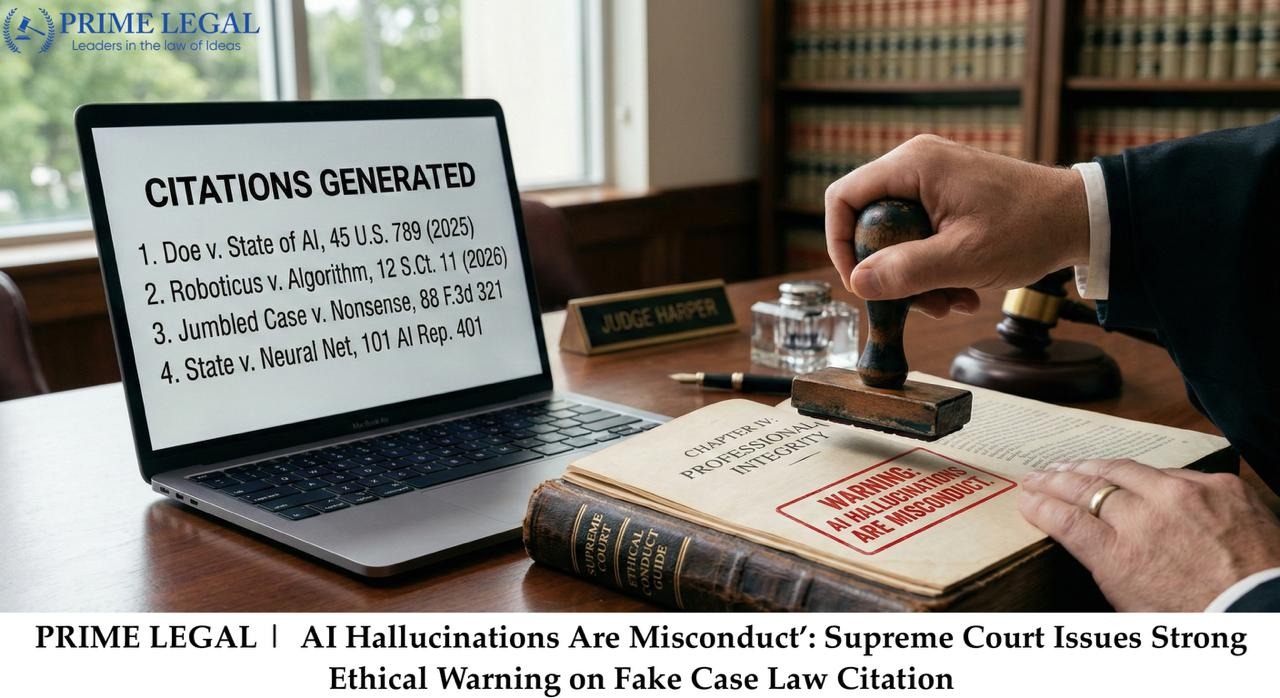

The Supreme Court of India has observed that relying on citations or case law supplied by AI without verification is dangerous, because AI tools can hallucinate citations to cases that do not actually exist. In a recent matter, a trial court in Andhra Pradesh relied on AI-supplied citations that were false and non-existent, and passed its judgment on the basis of them. The case raised a question: AI has become a heavily relied-upon source of information, yet when it produces hallucinated citations, the precedents cited by judges become an unreliable foundation and an unfair pillar of justice in society. Treating the matter as one of institutional integrity, the bench agreed that it needs to examine the accountability and consequences that follow from the misuse of AI tools. The Supreme Court warned that relying on fake AI-generated judgments constitutes judicial misconduct, which carries real consequences.

BACKGROUND:

In a recent dispute before a trial court in Andhra Pradesh, the trial judge relied on four AI-generated precedents, cited as judgments of the Supreme Court and the High Court. When the losing party challenged the judgment, it emerged that the precedents the trial judge had relied on did not exist, as they were AI-generated. The trial judge told the High Court that she had used an AI tool for the first time and had not acted dishonestly. The High Court did not quash the order, as the facts and principles had been applied correctly and the mistake was genuine rather than intentional. The Supreme Court, however, took a stricter view and warned other courts and judges not to rely fully on AI-generated material without verification.

KEY POINTS:

- The bench of Justices P.S. Narasimha and Alok Aradhe characterized reliance on AI-generated material as “misconduct”, stated that the issue cannot be set aside casually, and pointed to the threat it poses. The bench stated that merely relying on AI-generated material can be a threat to justice and should not be taken lightly, because its consequence is that justice is hindered. The Supreme Court treated this as an important issue to be resolved quickly, as it would be dangerous if left unresolved.

- Through this case, the court found that the institutional concern is much wider in nature. This is not the only case in which justice has been hampered by the use of AI-generated material. There may be many other situations in which AI has played a major role in supplying information that is false and was never properly verified. This raises a serious issue for the country's adjudicatory system, where justice is the core element and excessive reliance on unverified AI-generated material can hinder it.

- The court also addressed the accountability of judicial officers, who must be more careful about relying on AI-generated facts and precedents without proper checks. They would be held accountable for delivering a judgment based on AI-generated precedents without verifying them. A person should not be left without justice because of a judge's mistake.

- The Bombay High Court has imposed monetary costs on litigants for submitting false arguments and reasoning; submitting data from AI tools without proper checks can likewise make litigants accountable. The court has even stated that unverified AI-generated facts and reasoning are a waste of judicial time. The point is that AI is helpful, but relying on it completely without proper factual reasoning can be dangerous.

- The court set out the liabilities of lawyers and advocates along with those of judges. It stated that accountability ranges from a monetary fine to strong judicial action. It underlined the seriousness of AI hallucinations in a legal arena that turns to AI for better information, and said the matter should be dealt with carefully.

RECENT DEVELOPMENTS:

After this case, the Supreme Court stated that AI hallucination is a serious issue and that every judgment resting on precedents produced by AI hallucination should be examined individually. The court framed the issue as a direct threat to the adjudicatory process and found a need for clearer guidelines on the use of, and reliance on, AI. Courts are accordingly working towards more reliable use of AI.

CONCLUSION:

The case shows how AI can be a threat to society if it is not handled properly, and how badly it can affect the administration of justice, which lies at society's core. The court has stated the need for better guidelines on the usage of AI. AI can be really helpful in yielding better results if it is used with caution and responsibility.

“PRIME LEGAL is a National Award-winning law firm with over two decades of experience across diverse legal sectors. We are dedicated to setting the standard for legal excellence in civil, criminal, and family law.”

WRITTEN BY: MEENAKSHI DANGI